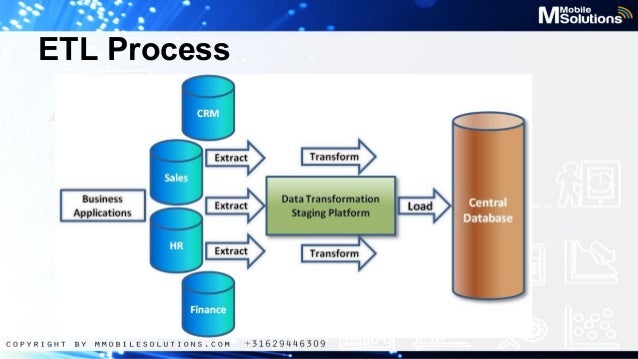

ETL has become a global hit, especially since enterprises came to depend on business intelligence tools for reports, analyses, and insights. To maintain any e-commerce or digital business, a common approach is to store the data in a data warehouse, which can combine data from various sources and keep it in a uniform, compatible structure using ETL. Turning dissimilar data into similar data in this way is what is called transformation. More recently, text files, legacy system files, and spreadsheets can also be handled using ETL.

The extraction process occurs on an OLTP database, and then the transformation is done to match the schema of the data warehouse.

Step 1- Extraction: The relevant data is extracted from the source system.

Step 2- Transformation: The major chunk of effort is spent on making keys. There are various types of keys, such as primary, foreign, alternate, composite, and surrogate keys.
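To make the steps concrete, here is a minimal sketch of extraction from an OLTP-style source, a transformation that assigns surrogate keys, and a load into a warehouse schema. Everything in it is an assumption made for illustration: the `orders`, `dim_customer`, and `fact_orders` tables, their columns, and the helper names are hypothetical, and in-memory SQLite databases stand in for the real source system and warehouse.

```python
import sqlite3


def extract(source):
    """Extraction: pull the relevant rows from the (hypothetical) OLTP table."""
    return source.execute(
        "SELECT order_id, customer_email, amount_cents FROM orders ORDER BY order_id"
    ).fetchall()


def transform(rows):
    """Transformation: clean values and assign a surrogate key per customer."""
    customer_keys = {}  # natural key (cleaned email) -> surrogate key
    facts = []
    for order_id, email, amount_cents in rows:
        email = email.strip().lower()  # make dissimilar spellings similar
        key = customer_keys.setdefault(email, len(customer_keys) + 1)
        facts.append((order_id, key, amount_cents / 100))
    return customer_keys, facts


def load(warehouse, customer_keys, facts):
    """Load: write the transformed rows into the warehouse schema."""
    warehouse.execute(
        "CREATE TABLE dim_customer ("
        "customer_key INTEGER PRIMARY KEY, email TEXT UNIQUE)"
    )
    warehouse.execute(
        "CREATE TABLE fact_orders ("
        "order_id INTEGER PRIMARY KEY, "
        "customer_key INTEGER REFERENCES dim_customer(customer_key), "
        "amount REAL)"
    )
    warehouse.executemany(
        "INSERT INTO dim_customer VALUES (?, ?)",
        [(key, email) for email, key in customer_keys.items()],
    )
    warehouse.executemany("INSERT INTO fact_orders VALUES (?, ?, ?)", facts)
    warehouse.commit()


def run_demo():
    """Run the three steps on a tiny made-up data set."""
    source = sqlite3.connect(":memory:")
    source.execute(
        "CREATE TABLE orders (order_id INTEGER, customer_email TEXT, amount_cents INTEGER)"
    )
    source.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            (1, "Ann@Example.com ", 1250),
            (2, "bob@example.com", 800),
            (3, "ann@example.com", 300),
        ],
    )
    warehouse = sqlite3.connect(":memory:")
    customer_keys, facts = transform(extract(source))
    load(warehouse, customer_keys, facts)
    return warehouse.execute(
        "SELECT order_id, customer_key, amount FROM fact_orders ORDER BY order_id"
    ).fetchall()


if __name__ == "__main__":
    print(run_demo())
```

In this sketch the customer's email acts as the natural key from the source, while `customer_key` is the surrogate key generated during transformation; `fact_orders.customer_key` is then a foreign key pointing at the primary key of `dim_customer`. A real pipeline would typically persist the key mapping between runs rather than rebuild it each time.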

ETL is the abbreviation of Extract-Transform-Load. These three operations are performed on data. To understand ETL, we need to understand the process of data loading from source to data warehouse.

- Teradata Data Validation – Validate large data volumes stored and processed in Teradata systems.
- SAP Data Validation – Validate data used in business logic and reporting, and test data integration, export and import processes.
- WhereScape Test Automation – Automate test tasks for data warehouses created with WhereScape and perform health checks of the WhereScape model.
- Postal Address Validation – Geocoding address data and comparing it with a reliable source, such as a postal service database, to ensure its accuracy and existence.
- DataOps Automation – BiG EVAL assists with automated testing and validation of data, enhancing efficiency and reliability in DataOps.
